Compute at the constrained edge.

The interesting deep-tech problems are bounded: by power, latency, weight, cost, and certification. I have spent a decade designing AI inside those constraints, and that is where I want to keep going deeper.

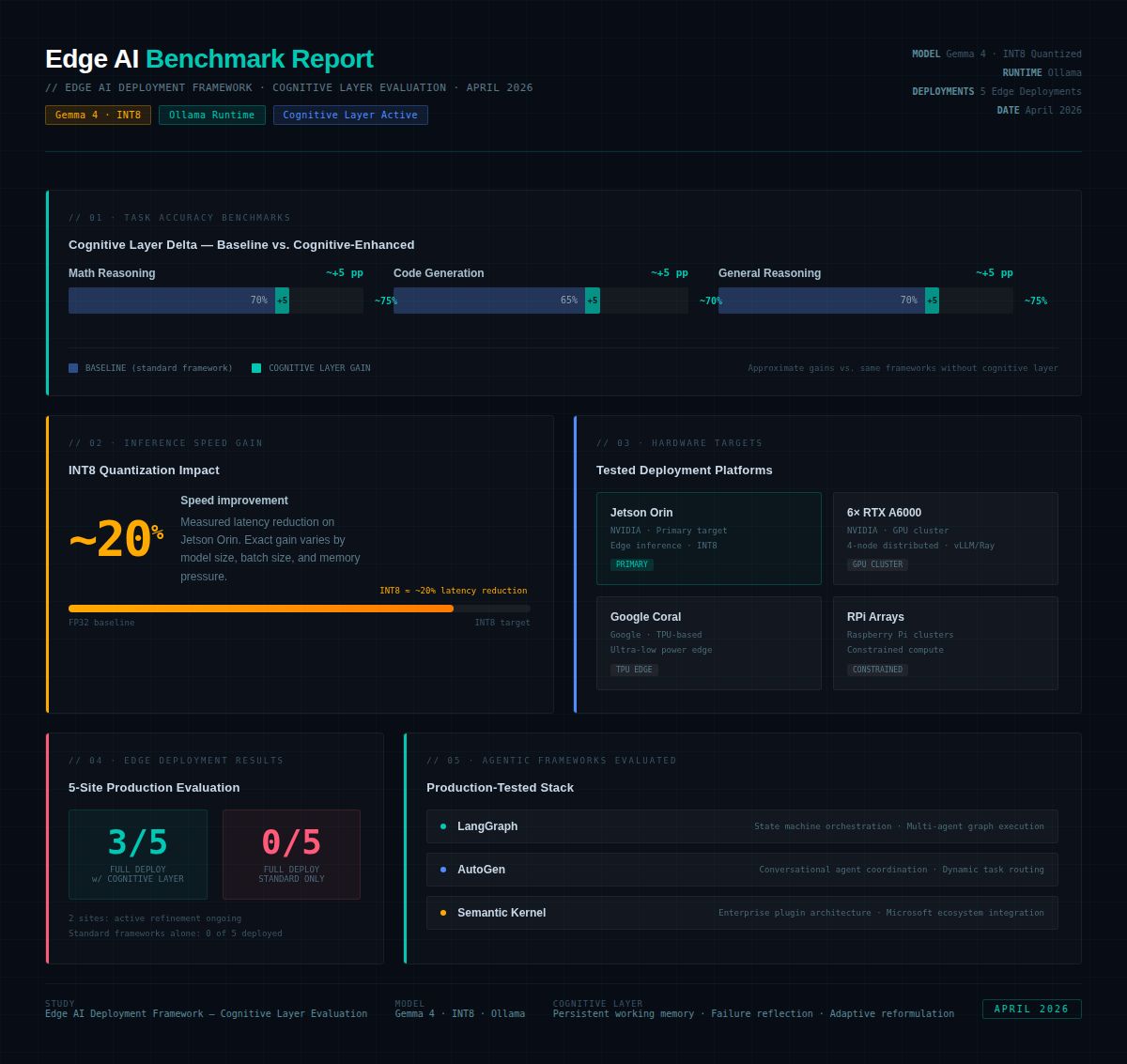

- Mercedes-Benz: INT8/FP16 quantized CV/ML on automotive gateways; ISO 26262- and GDPR-compliant onboard ML.

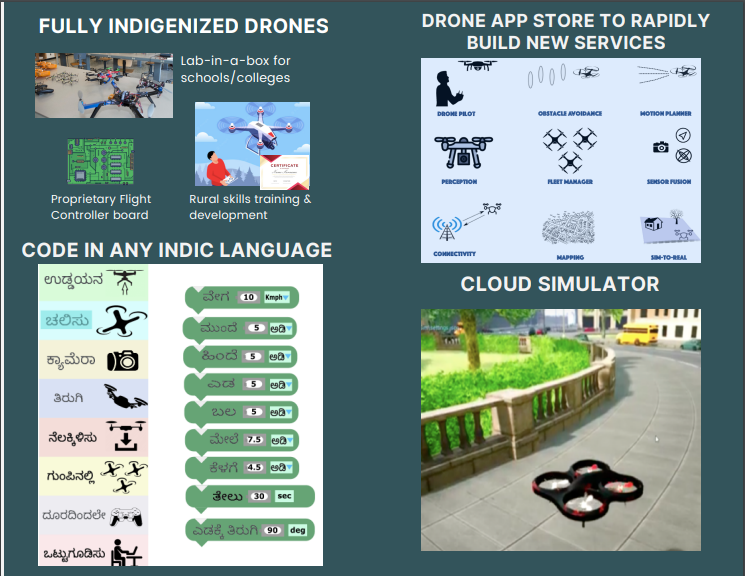

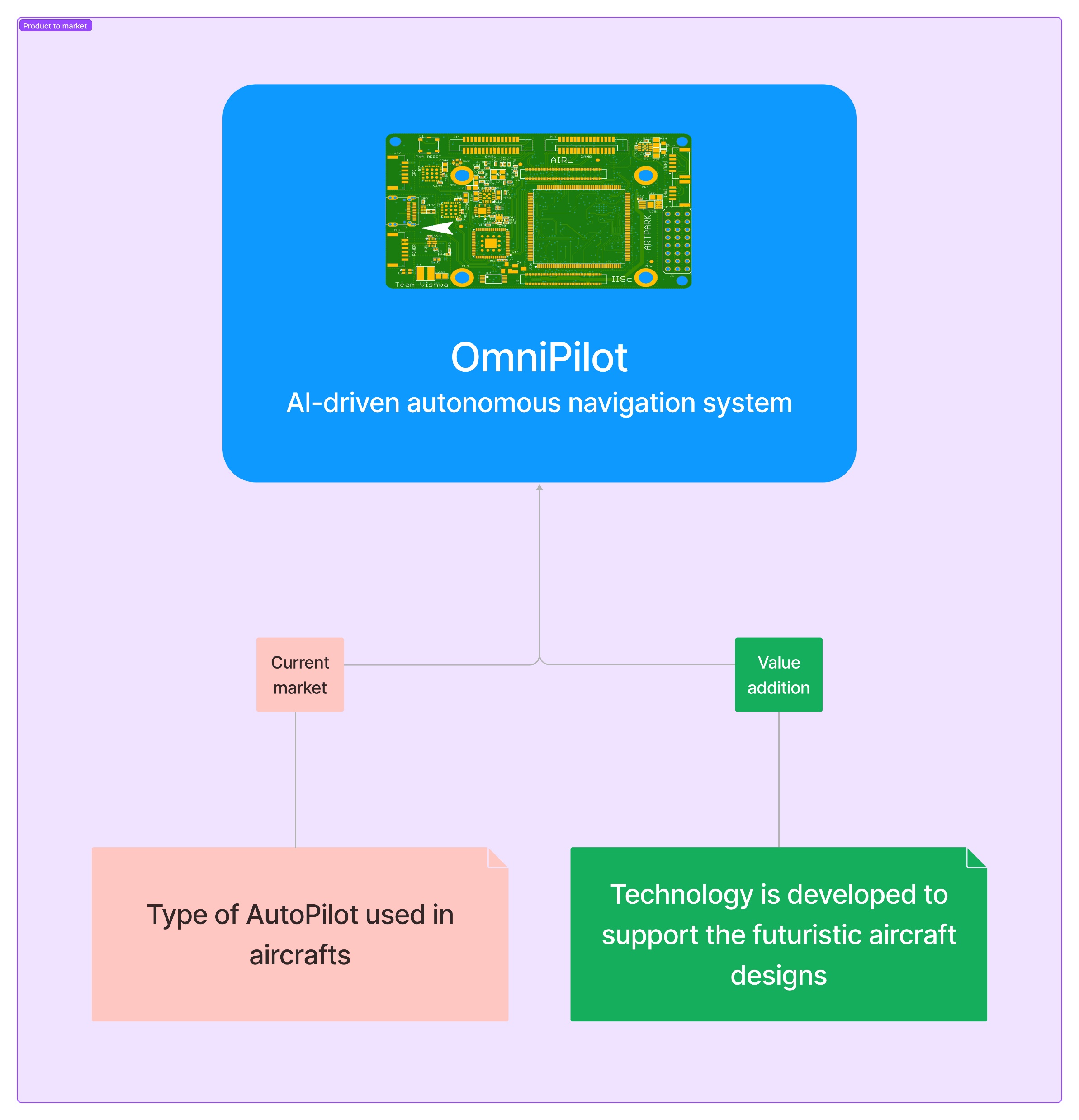

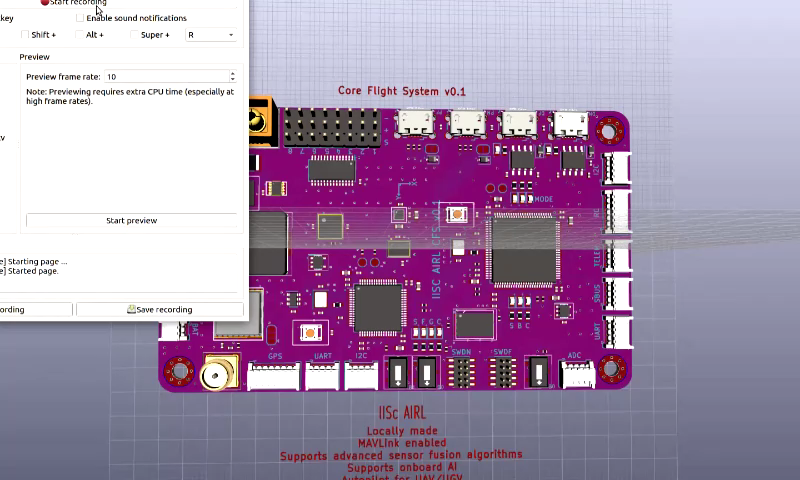

- Vishwa Dynamics: Omnipilot — custom AI flight computer for nano-UAVs; ARM-based edge compute, mission-critical, defense-grade.

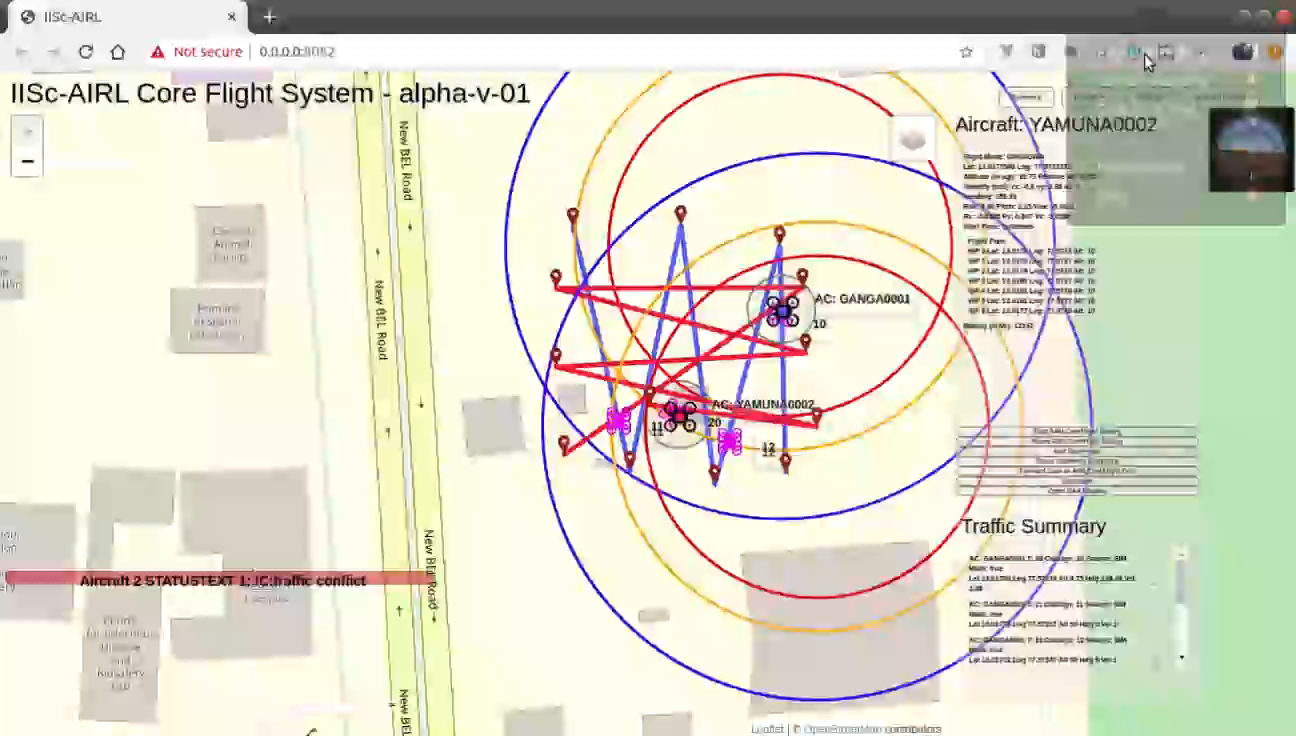

- SwarmX: ARM flight systems with precision landing, deployed across fleets of 5–25 UAVs in regulated environments.

- NTU / Temasek Labs: Pixhawk-based flight computing platform for signal identification in unknown terrain.

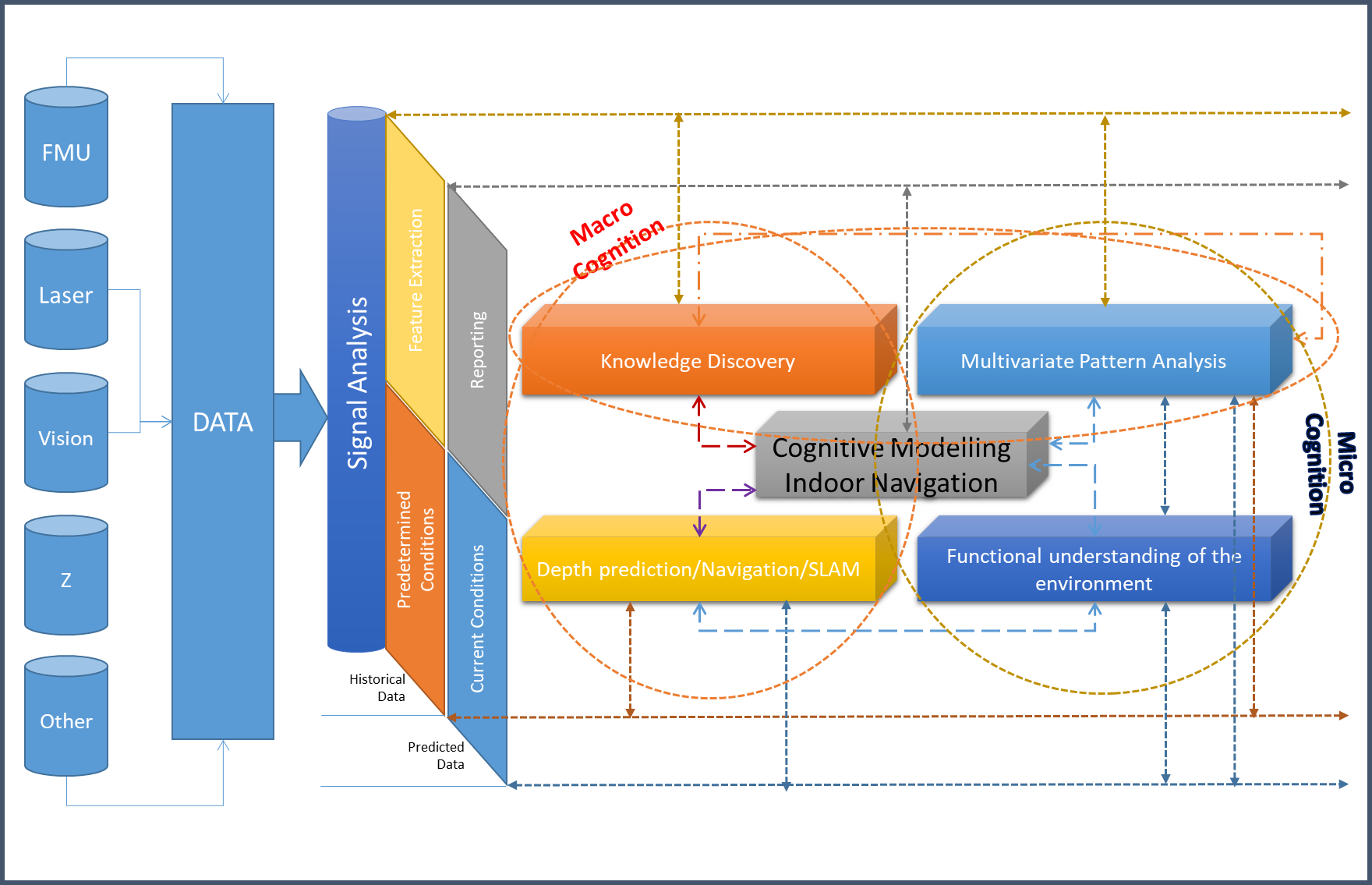

- Republic Polytechnic / Toyota: low-cost ARM navigation explored as a lidar replacement for indoor warehousing UAVs.

- Two granted patents and two filed IP disclosures covering edge analytics platforms and intelligent computing for robotics.